where dq is the total charge of one sign produced in air when all electrons liberated by photons in air of mass dm deposit all of their energy in the air. The classical unit of exposure is the roentgen (R), which is equivalent to the production of 2.58 × 10⁻⁴ C kg⁻¹ in dry air.

From a radiation safety standpoint, it is most convenient to focus on absorbed dose. By definition, the absorbed dose is equal to

$$D = \frac{d\varepsilon}{dm}$$

where ε is the expectation value of the energy imparted in a finite volume V at a point P, and dm is the mass of the infinitesimal volume element at P. The expectation value is appropriate because the energy imparted by charged particles is fundamentally a stochastic process governed by laws of probability and subject to statistical uncertainty. A reasonable estimate of the mean of this process is only realized once enough events accumulate in V. The SI unit for dose is the gray (Gy), where

$$1\ \text{Gy} = 1\ \text{J kg}^{-1}$$

Another quantity, one that accounts for differences in radiation quality, is the equivalent dose. Because diagnostic imaging uses only photons, which have a radiation weighting factor of unity, the absorbed dose can be assumed to be numerically equal to the equivalent dose, which has units of sievert (Sv). Given that equivalent dose corresponds to the energy deposited in tissue, it is often preferred over exposure in diagnostic radiology.

Each tissue or organ in the human body responds differently to ionizing radiation. For the same absorbed dose, the probability of inducing a stochastic effect in one organ will be different from that in another. To account for these differences, tissue-weighting factors have been developed by the ICRP and NCRP. The product of the equivalent dose and the tissue-weighting factor gives a quantity that correlates to the overall detriment to the body from damage to the organ or tissue being irradiated. The detriment includes both mortality and morbidity risks associated with cancer and severe genetic effects. The sum of the tissue-weighting factors equals unity. The total risk for all stochastic effects in an irradiated individual is known as the effective dose, which is defined as

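$$E = \sum_{T} w_T H_T$$

where $w_T$ is the tissue-weighting factor for tissue or organ T and $H_T$ is the equivalent dose to that tissue or organ.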

The unit of effective dose is the sievert (Sv). It is important to remember that the concept of effective dose was designed for radiation protection purposes. It reflects the total radiation detriment from an exposure averaged over all ages and both sexes. Effective dose is calculated in a reference computational phantom that is not representative of any single patient, given that the phantom is androgynous and of an age representing the average age of a working adult. It should be noted that recent recommendations have called for a modified effective dose calculation procedure that uses sex-specific phantoms. Effective dose should never be used to predict risk to an individual patient, but only to compare potential risk between different exposures to ionizing radiation, such as computed tomography (CT) examinations. For example, effective dose can be used to compare relative risks, averaged over the population, from different proposed CT protocols or scanners.
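The weighted sum behind effective dose can be sketched in a few lines of Python. This is a minimal illustration, not a dosimetry tool: the tissue-weighting factors are those of ICRP Publication 103 as recalled here (the chapter does not list them, so they are an assumption to verify against the publication), and the organ doses in `example_doses_msv` are hypothetical.

```python
# Tissue-weighting factors as recalled from ICRP Publication 103.
# Assumption: the chapter does not list these values; verify before use.
# "remainder" stands for the group of remaining tissues taken as one entry.
ICRP103_WEIGHTS = {
    "bone_marrow": 0.12, "colon": 0.12, "lung": 0.12, "stomach": 0.12,
    "breast": 0.12, "remainder": 0.12,
    "gonads": 0.08,
    "bladder": 0.04, "oesophagus": 0.04, "liver": 0.04, "thyroid": 0.04,
    "bone_surface": 0.01, "brain": 0.01, "salivary_glands": 0.01, "skin": 0.01,
}


def effective_dose(equivalent_doses_msv, weights=ICRP103_WEIGHTS):
    """Return E = sum over tissues of w_T * H_T, in mSv.

    Tissues absent from the input are treated as receiving zero dose.
    """
    unknown = set(equivalent_doses_msv) - set(weights)
    if unknown:
        raise KeyError(f"no weighting factor for: {sorted(unknown)}")
    return sum(weights[t] * h for t, h in equivalent_doses_msv.items())


# Hypothetical equivalent doses (mSv) for a partial-body exposure.
example_doses_msv = {"lung": 10.0, "breast": 10.0, "thyroid": 2.0}

print("sum of weights:", round(sum(ICRP103_WEIGHTS.values()), 6))
print("effective dose (mSv):", round(effective_dose(example_doses_msv), 3))
```

Because the weights sum to unity, a uniform whole-body equivalent dose of 1 mSv gives an effective dose of 1 mSv, which is a convenient sanity check on any weight table.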

Given the large volume of CT scans performed each year and associated concerns about absorbed dose to the patient, various metrics have been developed to characterize dose from CT scans. The most fundamental radiation dose metric used for CT imaging is the Computed Tomography Dose Index (CTDI).17,18 The CTDIvol parameter is most commonly displayed on CT scanners. The CTDIvol is derived from measurements of the CTDI100, which is defined as

$$\text{CTDI}_{100} = \frac{1}{nT} \int_{-50\ \text{mm}}^{+50\ \text{mm}} D(z)\, dz$$

where n is the number of slices acquired during the scan, T is the width of each slice, and D(z) is the dose profile resulting from a single axial rotation, measured with a 10-cm-long ionization chamber. The chamber is placed either at the center or at the periphery of a polymethyl methacrylate dose phantom. Typically, the measurements are made in units of air kerma (K), where K (mGy) = 8.73 × X (R). There are two standard dose phantoms used to acquire the CTDI100: one has a 16-cm diameter and the other has a 32-cm diameter. Both of these phantoms have a length of 15 cm. Such measurements may be used to provide an indication of the average dose delivered over a single slice. The CTDIvol value is given as

$$\text{CTDI}_{vol} = \frac{\text{CTDI}_{w}}{\text{pitch}}$$

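where the pitch is defined as the ratio of the table feed (in mm) per 360° gantry rotation to the nominal beam width (nT), and CTDIw is given by

$$\text{CTDI}_{w} = \frac{1}{3}\,\text{CTDI}_{100,\text{center}} + \frac{2}{3}\,\text{CTDI}_{100,\text{periphery}}$$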

Several variables impact CTDIvol, including tube voltage, tube current, gantry rotation time, and pitch.
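As a rough sketch of how these quantities chain together, the Python below converts an exposure reading to air kerma with the 8.73 factor quoted above, forms the weighted CTDI using the standard one-third center and two-thirds periphery weighting, and divides by pitch to obtain CTDIvol. The function names and all numerical inputs are hypothetical and chosen only to make the arithmetic easy to follow.

```python
R_TO_MGY_AIR_KERMA = 8.73  # from the text: K (mGy) = 8.73 x X (R)


def air_kerma_mgy(exposure_r):
    """Convert an exposure reading in roentgen to air kerma in mGy."""
    return R_TO_MGY_AIR_KERMA * exposure_r


def pitch(table_feed_mm, n_slices, slice_width_mm):
    """Table feed per 360-degree rotation divided by nominal beam width nT."""
    return table_feed_mm / (n_slices * slice_width_mm)


def ctdi_w(ctdi100_center_mgy, ctdi100_periphery_mgy):
    """Weighted CTDI: one third center plus two thirds periphery."""
    return ctdi100_center_mgy / 3.0 + 2.0 * ctdi100_periphery_mgy / 3.0


def ctdi_vol(ctdi_w_mgy, pitch_value):
    """Volume CTDI: weighted CTDI divided by pitch."""
    if pitch_value <= 0:
        raise ValueError("pitch must be positive")
    return ctdi_w_mgy / pitch_value


# Hypothetical measurements in a dose phantom (mGy).
center, periphery = 10.0, 13.0
p = pitch(table_feed_mm=30.0, n_slices=16, slice_width_mm=1.25)
w = ctdi_w(center, periphery)

print("pitch:", p)
print("CTDIw (mGy):", round(w, 3))
print("CTDIvol (mGy):", round(ctdi_vol(w, p), 3))
```

Note that a pitch greater than 1 lowers CTDIvol relative to CTDIw, which is why pitch appears among the variables that affect the displayed value.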

Another parameter that is often used to characterize dose from a CT scan is called the dose length product (DLP). The DLP is simply defined as

$$\text{DLP} = \text{CTDI}_{vol} \times \text{scan length}$$

where the scan length is the product of the total number of scans and the scan width. Given that the intention of the DLP is to provide information about the total exposure over the entire volume of the scan, an approximation of the effective dose from the scan can be made using a DLP to effective dose conversion factor. The effective dose is given as

$$E = k \times \text{DLP}$$

where k is the conversion factor (typically in units of mSv mGy⁻¹ cm⁻¹) that varies depending on the region of the body being scanned. The conversion factor k is derived from Monte Carlo calculations in a computational anthropomorphic phantom.
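The two-step estimate (CTDIvol times scan length gives DLP, and k times DLP gives effective dose) can be illustrated with a short Python sketch. The k values in `K_FACTORS` are commonly quoted adult values reproduced here as an assumption, since the chapter does not tabulate them; they should be checked against the reference in use. The scan parameters are hypothetical.

```python
# Region-specific conversion factors k in mSv per (mGy * cm).
# Assumption: commonly quoted adult values, not taken from this chapter.
K_FACTORS = {
    "head": 0.0021,
    "neck": 0.0059,
    "chest": 0.014,
    "abdomen": 0.015,
    "pelvis": 0.015,
}


def dose_length_product(ctdi_vol_mgy, scan_length_cm):
    """DLP in mGy*cm: CTDIvol multiplied by the scan length."""
    return ctdi_vol_mgy * scan_length_cm


def effective_dose_from_dlp(dlp_mgy_cm, region, k_factors=K_FACTORS):
    """Approximate effective dose in mSv as k * DLP for a body region."""
    return k_factors[region] * dlp_mgy_cm


# Hypothetical chest scan: CTDIvol of 8 mGy over a 40 cm scan length.
dlp = dose_length_product(8.0, 40.0)
print("DLP (mGy*cm):", dlp)
print("effective dose (mSv):", round(effective_dose_from_dlp(dlp, "chest"), 3))
```

The result is a population-averaged estimate for a reference phantom, so it is suited to comparing protocols rather than to assigning a dose to a particular patient.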

BIOLOGIC EFFECTS OF RADIATION

Biologic effects from ionizing radiation are generally classified as being stochastic or deterministic. Stochastic effects are injuries that manifest from the damage of one or only a few cells. Stochastic effects include hereditary effects and cancer. Deterministic effects result from damage to a large collection of cells, leading to damage to tissue or entire organs and systems in the body. Therefore, incidence and severity increase as a function of dose once a certain threshold for the effect to occur has been reached. Tissue reactions that are classified as deterministic effects include skin burns, hair loss, loss of thyroid function, and cataracts.

Cancer

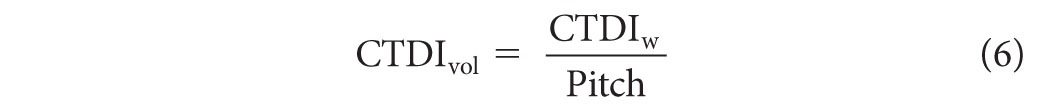

The most studied radiation-induced stochastic effect is cancer. Most of the data on radiation-induced cancer risks come from Japanese atomic bomb survivors through the Life Span Study (LSS), although other exposed populations have been studied as well, including patients receiving medical treatments, occupationally exposed groups, and environmentally exposed groups. Given these data, a clear linear relationship has been established between cancer induction and absorbed dose at high doses. Exceptions to this relationship have been found for leukemia and nonmelanoma skin cancer in atomic bomb survivor data and bone cancer in radium dial painters. It is also well known that the damage to a single cell or small number of cells can result in the induction of cancer even at very low doses. However, the exact relationship between the absorbed dose and the induction of a cancer in humans at low doses associated with diagnostic imaging procedures has been the subject of intense debate. For radiation protection purposes, it is generally assumed that a linear no-threshold (LNT) relationship exists between dose and effect, although evidence for a variety of other dose–effect relationships exists as illustrated in Figure 2.1. If the LNT holds for low doses, then a general rule of thumb for fatal cancer risk of 5% per sievert effective dose for a working adult has been proposed. For more detailed risk calculation methods, one should refer to the Biological Effects of Ionizing Radiation (BEIR) Report VII.20
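The rule of thumb above lends itself to a small worked example. The Python sketch below applies the 5% per sievert figure under the LNT assumption to compare two hypothetical CT protocols; it is meant only for population-averaged comparison between exposures, not for predicting risk to any individual, and the function names and dose values are illustrative assumptions.

```python
NOMINAL_FATAL_CANCER_RISK_PER_SV = 0.05  # rule of thumb quoted in the text


def nominal_fatal_cancer_risk(effective_dose_msv):
    """Population-averaged nominal risk under LNT: 5% per Sv times dose."""
    if effective_dose_msv < 0:
        raise ValueError("effective dose cannot be negative")
    return NOMINAL_FATAL_CANCER_RISK_PER_SV * effective_dose_msv / 1000.0


def risk_ratio(dose_a_msv, dose_b_msv):
    """Ratio of nominal risks; under LNT this equals the dose ratio."""
    return nominal_fatal_cancer_risk(dose_a_msv) / nominal_fatal_cancer_risk(dose_b_msv)


# Hypothetical effective doses (mSv) for two candidate CT protocols.
protocol_a_msv, protocol_b_msv = 8.0, 4.0

print("nominal risk A:", nominal_fatal_cancer_risk(protocol_a_msv))
print("nominal risk B:", nominal_fatal_cancer_risk(protocol_b_msv))
print("ratio A/B:", risk_ratio(protocol_a_msv, protocol_b_msv))
```

Because the model is linear with no threshold, halving the effective dose halves the nominal risk; any of the alternative dose-effect relationships would break that proportionality at low doses.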

FIG. 2.1 ● Illustration of different cancer risk versus dose relationships at low doses that have been derived from human and animal studies.19 Curve 1 is the linear no-threshold (LNT) model, in which the risk of cancer formation is assumed to be directly proportional to the dose. Most radiation safety advisory bodies recommend the LNT model for solid cancer formation induced by low doses of ionizing radiation. Curve 2 is the linear-quadratic (LQ) model. There is significant evidence that the LQ model best represents radiation-induced leukemia risk. Several investigations in humans and animals have also demonstrated a hormesis effect, shown in curve 3, in which radiation exposures below some dose threshold have produced a positive benefit. Finally, supralinear responses, shown in curve 4, in which risk is disproportionately high at low doses, have also been documented.

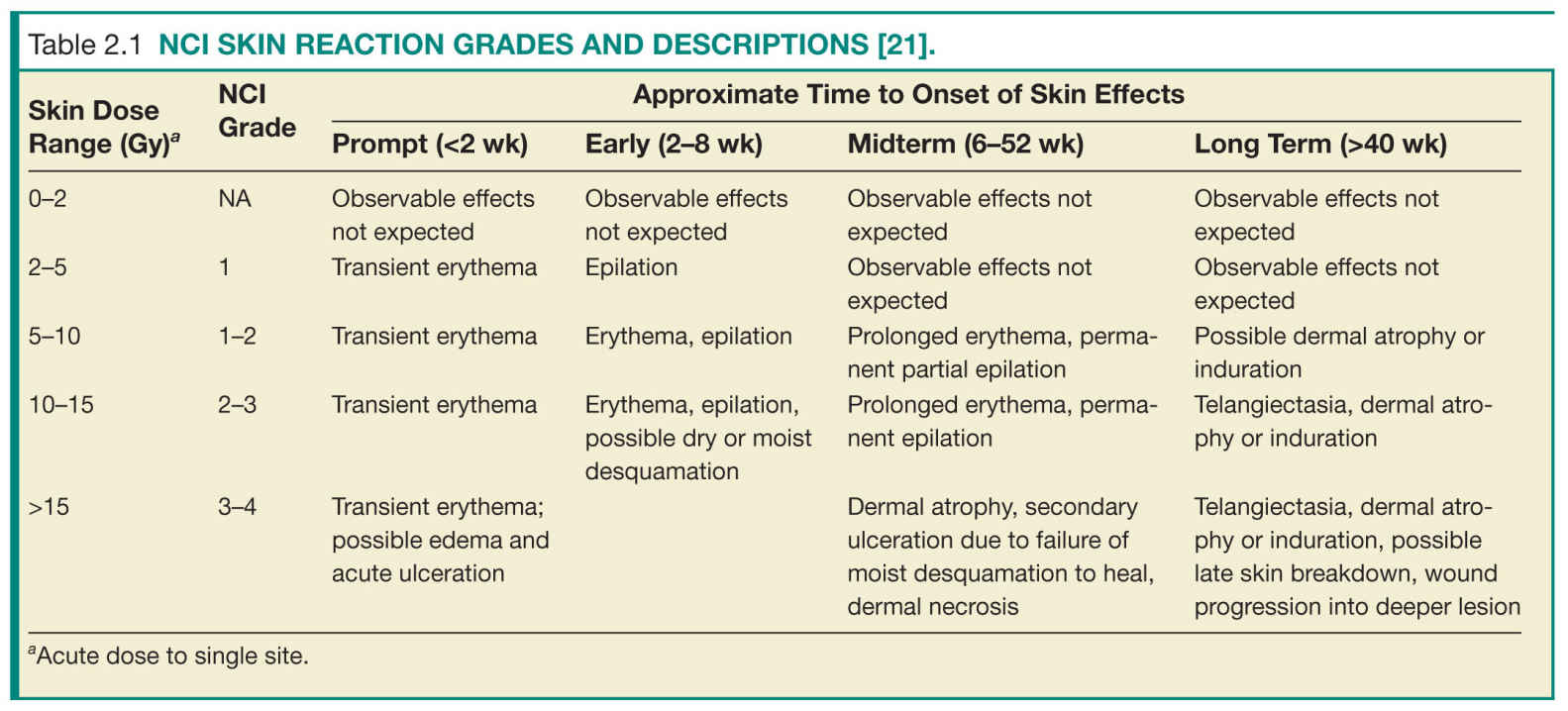

Skin Burns

Skin reactions from ionizing radiation have been well documented, particularly from external beam radiation therapy. Factors that impact the severity of the skin reaction include total dose, time interval between incremental exposures (known as dose fractionation), and size of the irradiated area on the patient. The most sensitive site on the patient is the anterior portion of the neck followed by the flexor portion of the extremities, the trunk, the back, the extensor surfaces of the extremities, the back of the neck, the scalp, and the palms of the hands and soles of the feet, in that order.21 Skin reactions include damage to the epidermis, dermis, and subcutaneous tissue. Skin reaction from diagnostic imaging can be classified by severity following the NCI Skin Reaction Grade, as shown in Table 2.1.21 This classification also considers the approximate time of onset of the effects. Prompt reactions occur within 2 weeks following exposure, early reactions occur 2 to 8 weeks after exposure, midterm reactions occur 6 to 52 weeks after exposure, and long-term reactions occur more than 40 weeks after exposure.

Cataracts

Severe adverse effects to the eye from radiation were reported within years after the discovery of X-rays,22 and cataract formation was one of the earliest effects observed among atomic bomb survivors.23,24 It is now well known that the subcapsular lens epithelium, particularly where it differentiates to lens fibers, is susceptible to radiation damage. The development of radiation-induced cataracts depends on radiation dose, dose rate, and age of the lens25 and is a known late effect of radiation exposure.26–29 While the current guidelines for the threshold dose of cataract formation range from 2 to 5 Gy, recent studies indicate that the threshold could be less than 0.5 Gy on the basis of evidence from various exposure situations.30,31 There is also strong evidence that cataract risk is better described by an LNT model.30 While limited data are available on cataract formation resulting from diagnostic exposures, studies have indicated that very low doses can lead to cataract formation 25 years or more after exposure.32

Pacemakers

Although not a direct biologic effect in patients, radiation damage to pacemakers can have serious health implications. It is well known that pacemakers are sensitive to ionizing radiation. Most pacemakers use small electronic circuits known as complementary metal-oxide-semiconductor (CMOS) circuits to detect the electrical activity of the heart through leads connected to the heart muscle. If a deficient signal is detected, the pacemaker automatically stimulates the heart to return it to normal function. Because free electrons produced by X-ray interactions induce a similar electrical effect in a CMOS circuit, the radiation dose deposited in the circuit can produce spurious signals that adversely affect pacemaker operation. It was once generally believed that the radiation doses received from diagnostic imaging were not high enough to induce deleterious effects in pacemakers, unlike in external beam radiation therapy, where pacemaker dose is a significant concern.33 However, recent investigations found that adverse events in pacemaker operation can occur during CT imaging.34

PATIENT SAFETY

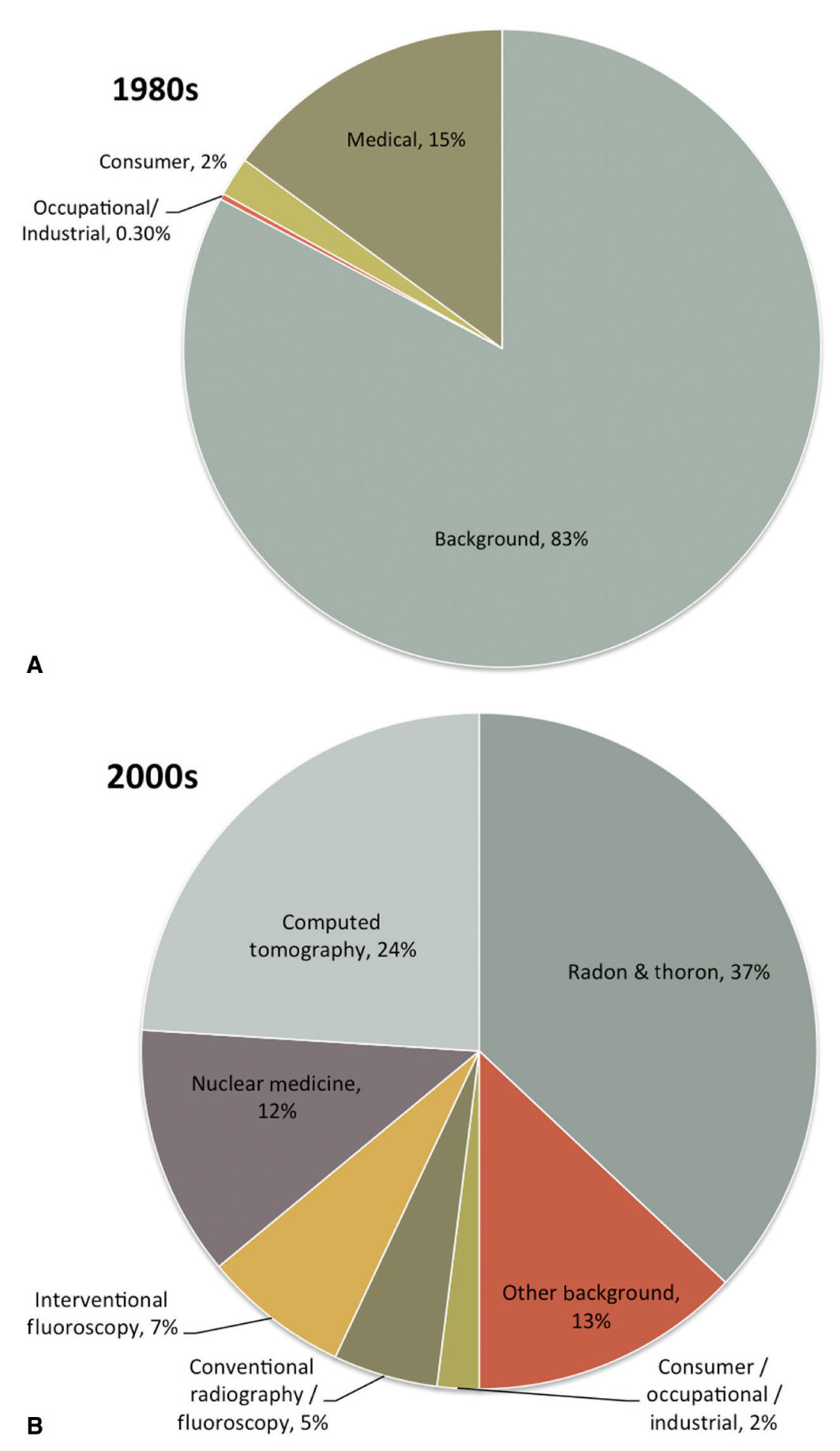

Diagnostic imaging continues to make a significant impact on the quality and effectiveness of health care in the United States and worldwide. As more physicians exploit diagnostic imaging for medical procedures and as patient access to advanced diagnostic imaging equipment has improved, the utilization of diagnostic imaging and, in particular, imaging that uses ionizing radiation, has dramatically increased in recent years. In fact, as of 2006, more than 48% of the annual amount of radiation exposure to an average member of the general public in the United States has been due to medical procedures.35 In contrast, only 15% of the annual exposure was due to medical procedures in the early 1980s.35 Figure 2.2 compares the various types and amounts of annual exposures to the average member of the general public in the United States between the early 1980s and 2006. Primarily due to the rapid rise in medical imaging use, the average annual effective dose to the U.S. population is now roughly 6.2 mSv, and probably higher, which is nearly double the average effective dose reported only two decades earlier.35

FIG. 2.2 ● Percentage contribution of various sources of exposure to the average individual in the United States in the (A) 1980s and (B) 2000s. (Data extracted from NCRP. Ionizing Radiation Exposure of the Population of the United States (Report No. 160). Bethesda, MD; 2009.)

Conventional Radiographic Examinations

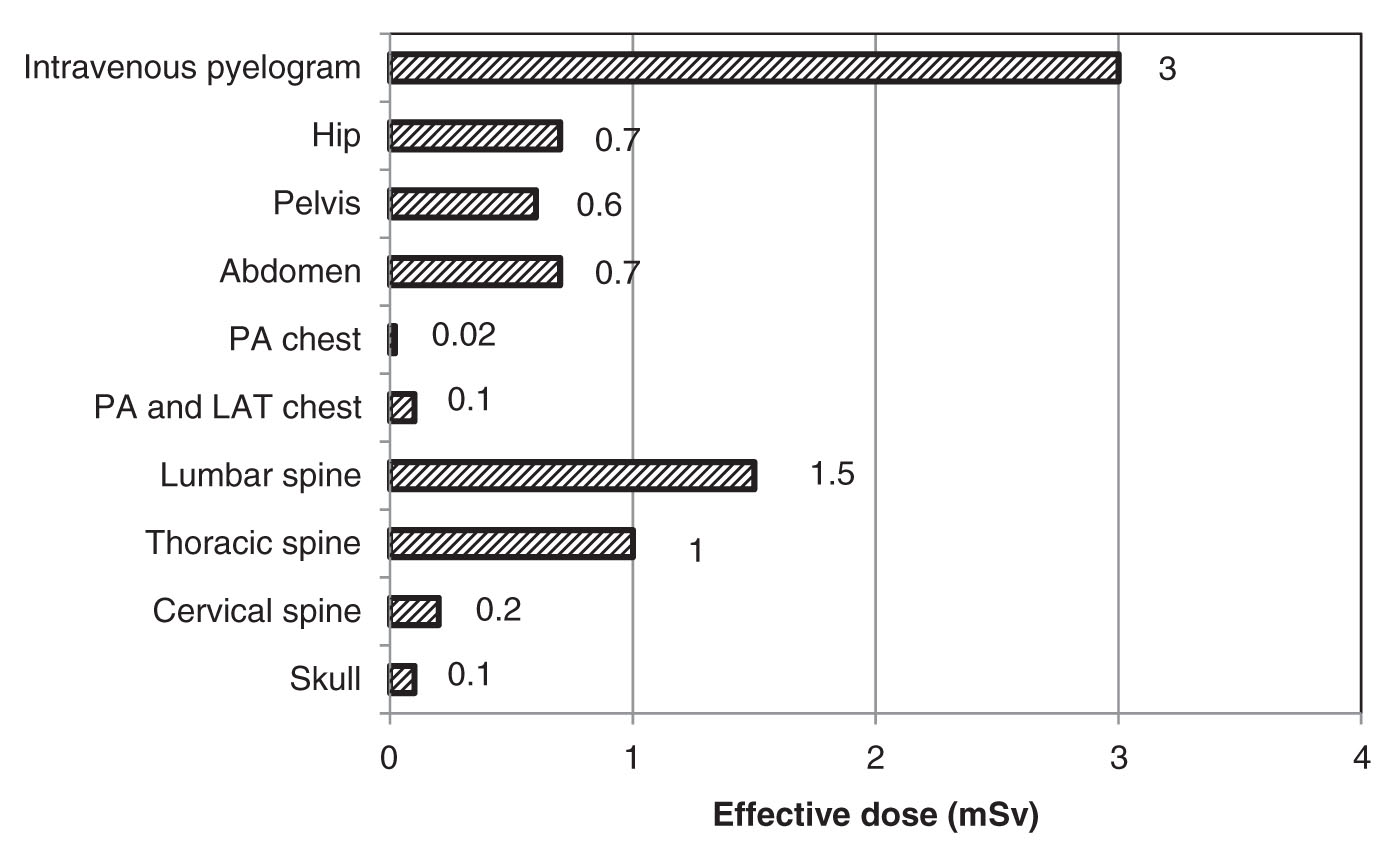

Conventional radiography accounts for the largest number of X-ray examinations of patients in the United States. Nearly 300 million conventional scans are performed each year.35 However, the effective dose from conventional scans is substantially lower than that from other types of scans, and as such conventional scans make up only a small percentage of the average annual effective dose to the population from diagnostic procedures. Typical conventional radiographic systems include screen-film image receptors, computed radiography, digital radiography, direct X-ray film exposure, and any other type of X-ray imaging system that produces two-dimensional images. Figure 2.3 provides the average effective dose received from different conventional radiography scans.

Except in extremely rare instances, two-dimensional radiographic imaging delivers radiation doses that are far below the thresholds for inducing deterministic effects. Therefore, the main patient safety concern in conventional radiography is to limit the probability of inducing stochastic effects, most notably radiation-induced cancers. Because conventional radiography is the most common form of X-ray examination involving a large number of patients exposed, there is at least a potential for producing a number of stochastic effects in these patients even though individual radiation doses are low. Fortunately, chest radiographs are both the most common and lowest dose X-ray examinations, with about 130 million examinations at an average effective dose of approximately 0.1 mSv. Chest radiographs are a good example of a screening procedure that is low dose, inexpensive, and effective. One of the keys to limiting the number of adverse stochastic effects caused by screening procedures such as this is to ensure that each X-ray examination that is ordered has a clear medical benefit. This can be as simple as checking for previous images that may have recently been taken. Electronic medical records with shared access by different medical providers help to alleviate much of the wasted radiation exposure that has occurred in the past by unnecessarily repeated examinations.

Another way to limit the potential for stochastic effects from radiation exposure is to use the lowest possible dose for each image. Film-screen radiography has largely given way to digital methods, which have the potential to reduce patient dose for a number of reasons. Most digital image receptors have higher absorption efficiency for detecting ionizing radiation, so for the same image quality, fewer X-ray photons and thus a lower radiation dose can be used. In addition, film-screen systems produce good image quality over only a narrow range of radiation dose, which can lead to retakes simply caused by films that are too light or too dark. This does, however, force relatively tight control over the amount of dose that is used. In digital images, on the other hand, the brightness and contrast are freely selectable after the exposure independently of dose, eliminating one important cause of extra radiation dose associated with repeating exposures. Underexposure in a digital image results in increased noise whereas overexposure results in an image with less noise. Because changes in the level of noise are not nearly as apparent as the changes in image brightness and contrast, it is possible to produce images that are diagnostic with much more latitude in the amount of radiation used.

To avoid images that look too noisy, there is a tendency to use a higher-than-necessary radiation dose, resulting in “exposure creep” over time.36 To prevent this, an exposure index is provided with the digital image that indicates the radiation dose to the image receptor. A systematic quality control program that monitors the exposure indices of images and incorporates feedback from the radiologists can be used to produce images with an acceptable level of noise for the diagnostic requirements of the imaging task while using a reasonably low radiation dose.

Computed Tomography

The data presented in Figure 2.2 represent a dramatic shift in the distribution of exposures due to the increased use of diagnostic imaging in medicine. Evidently, the largest contributor to this shift was the surge of CT procedures in the past couple of decades. The annual number of CT procedures in the United States increased from 18.3 million in 1993 to 60 million in 2006, during which the average increase in CT use was greater than 10% per year.35 This surge has been attributed to the advent of helical CT and multidetector CT (MDCT) scanners, which have enabled rapid image acquisitions and a larger volume of patients to be scanned.35 With these technologies, it is now possible to scan the entire body of a patient in less than 30 s.

FIG. 2.3 ● The average effective dose from various conventional radiography scans. (Data extracted from NCRP. Ionizing Radiation Exposure of the Population of the United States (Report 160). Bethesda, MD; 2009.)

Given the large number of CT scans performed each year, a great deal of attention has been devoted to understanding the factors that influence radiation dose from CT scans and to developing ways to reduce this dose. CT scans require a higher dose than projection radiographs because many more images are produced during each procedure, thus providing much more information about the structures within the patient. A fundamental principle of all X-ray imaging is that more photons are required to produce images with more information, whether that involves higher spatial resolution or better contrast resolution. The resolution of projection images is limited to only two dimensions, whereas CT images provide anatomical detail in all three dimensions. This greatly improves the visibility of low-contrast features by eliminating overlap in the images of structures that lie over one another, but it does require more radiation dose.

The amount of dose a patient receives from a CT procedure typically depends on both scanner design factors and clinical protocol factors. The dose efficiency of a scanner is defined as the fraction of primary X-rays exiting the patient that contribute to the image.37 Obtaining maximum sensitivity from a scan for a given dose requires collecting as many of the primary photons that exit the patient as possible. The dose efficiency is a combination of the geometric and absorption efficiencies of the detection system. The geometric efficiency is the fraction of exiting photons that interact with the active volumes of the detector. Loss in geometric efficiency is caused by absorption in the collimation and detector housing. Consequently, geometric efficiency decreases when going from a single-slice scanner to a multislice scanner because of the increase in “dead space” between detectors in these systems. In addition, the beam penumbra in multislice CT scanners is removed to avoid field nonuniformities in the detectors. The absorption efficiency is the fraction of those photons that are collected in the active volume of the detectors, and it depends on the detector material. Therefore, dose reduction can be achieved through improvements in scanner design factors.
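A minimal numerical sketch of this relationship is given below in Python. It assumes that the "combination" of the two efficiencies is their product, which is the usual convention but is not stated explicitly in the text, and the efficiency values for the two scanner designs are hypothetical.

```python
def dose_efficiency(geometric_efficiency, absorption_efficiency):
    """Overall dose efficiency, assumed here to be the product of the
    geometric and absorption efficiencies (each a fraction in [0, 1])."""
    for name, value in (("geometric", geometric_efficiency),
                        ("absorption", absorption_efficiency)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} efficiency must lie between 0 and 1")
    return geometric_efficiency * absorption_efficiency


# Hypothetical scanners: the multislice design loses geometric efficiency
# to dead space between detector elements but uses the same detector material.
single_slice = dose_efficiency(0.90, 0.90)
multislice = dose_efficiency(0.80, 0.90)

print("single-slice dose efficiency:", round(single_slice, 3))
print("multislice dose efficiency:", round(multislice, 3))
# For equal image signal, the required dose scales inversely with efficiency.
print("relative dose, multislice vs single-slice:",
      round(single_slice / multislice, 3))
```

Under this assumption, a drop in either factor raises the dose needed for the same detected signal, which is why both detector geometry and detector material matter for dose reduction.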
